The first phase of the AI trade was about compute, but the second phase is being driven by constraints. Over the past two years, leadership was concentrated in a small group of AI chip designers and hyperscalers as demand for training and inference accelerated, which made sense in the early stages of a new technology cycle. As AI systems scale, however, the limits of the existing infrastructure are starting to show. Memory supply is tightening, storage demand is rising sharply, and electricity requirements for data centres are climbing at a pace the grid was never designed to handle[1]. These are not isolated developments, but early signals that AI is moving beyond a pure compute story and into a full infrastructure build-out across both semiconductors and physical assets.

Key Takeaways

- Memory shortages show AI is now constrained by infrastructure, not just compute

- Semiconductors are shifting from a cyclical tech segment to the first layer of a multi-year AI infrastructure build-out

- Power, grids and data centres are emerging as the next bottleneck in the AI cycle

Memory is the first visible pressure point

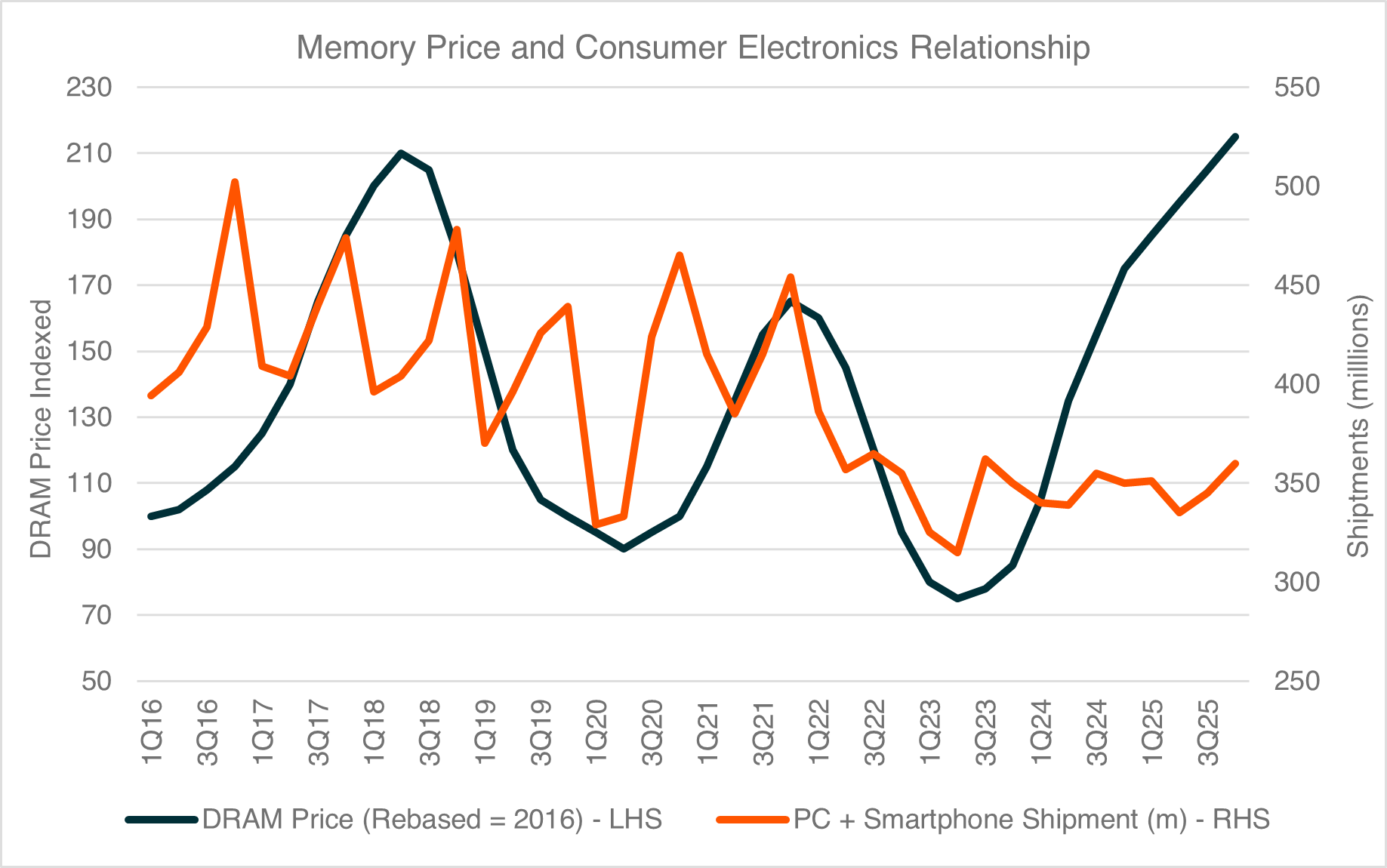

The clearest signal that the AI cycle is entering a new phase is coming from the memory market. In past cycles, memory demand was dominated by consumer devices, which made pricing highly cyclical and tied to handset and PC shipments. This time, the strongest demand is coming from AI servers, where memory and storage requirements are materially higher than in traditional workloads[2]. As a result, memory pricing is starting to move independently of consumer device volumes, signalling a shift away from the old consumer-driven cycle and toward a more structural, infrastructure-led demand profile.

Source: Bank of Korea, Gartner, IDC

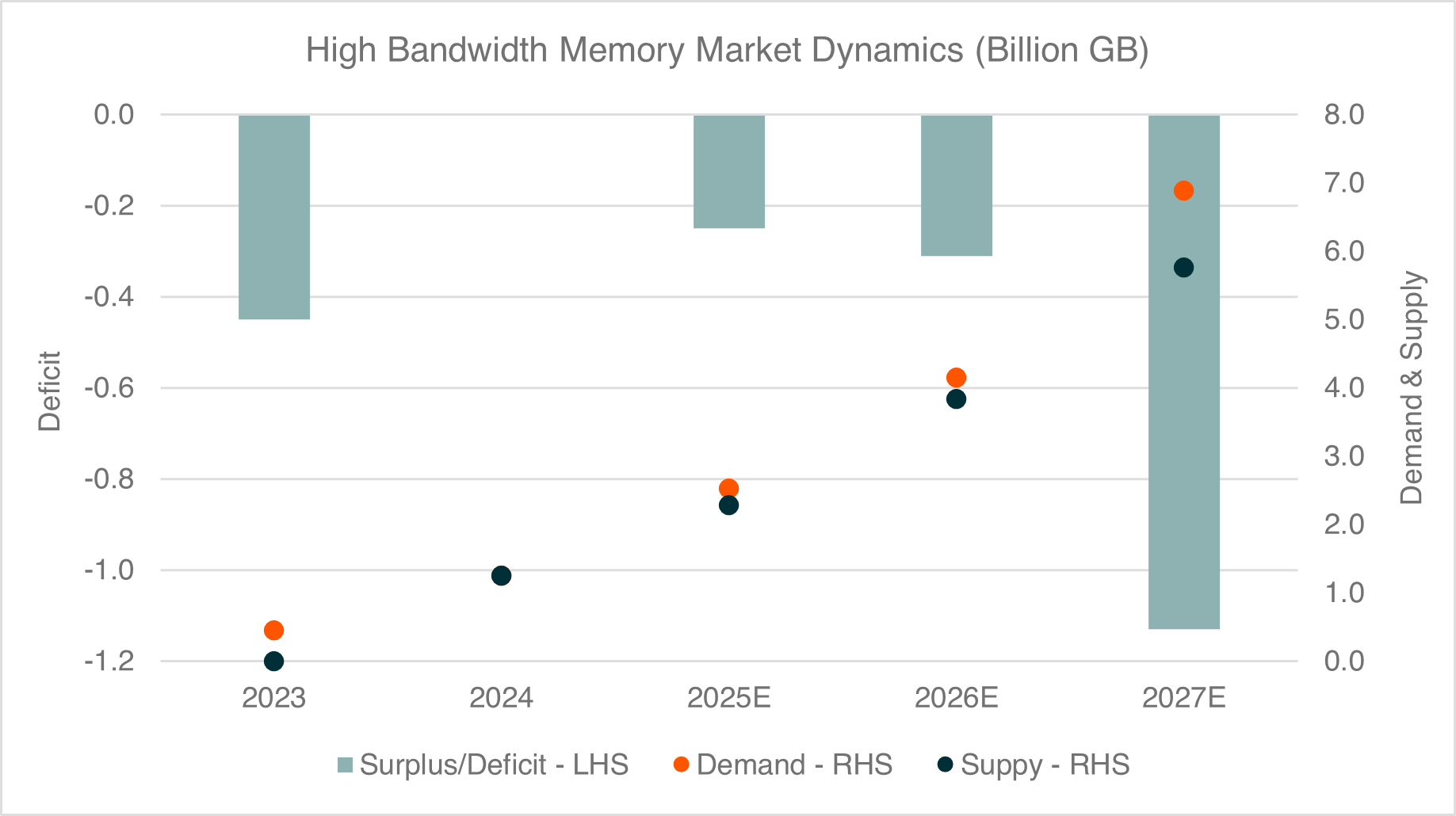

High-bandwidth memory (HBM) sits directly alongside advanced GPUs and custom accelerators, which means it scales with the complexity of the models themselves. As model sizes increase and inference becomes more widespread, memory capacity is emerging as a key limiting factor. Supply is expected to remain tight through at least 2027[3], while the HBM market is projected to grow at close to an 80% annual rate over the next several years. At the same time, enterprise solid-state drive (SSD) demand linked to AI inference is forecast to expand at roughly 50% per year, with storage requirements in AI servers growing more than twice as fast as in traditional systems[5].
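To make those growth rates concrete, the short sketch below compounds them over a few years. The 80% and 50% rates come from the paragraph above; the three-year horizon and the function name `compound_multiple` are illustrative assumptions, not figures or methods from the cited forecasts.

```python
def compound_multiple(annual_rate: float, years: int) -> float:
    """Return the size multiple after `years` of growth at `annual_rate`.

    annual_rate is a fraction, so 0.80 means 80% per year.
    """
    return (1.0 + annual_rate) ** years


# Rates are the approximate figures quoted in the text.
# The one-to-three-year horizon is an assumption chosen for illustration.
scenarios = [("HBM market", 0.80), ("Enterprise SSD demand", 0.50)]

for label, rate in scenarios:
    for years in (1, 2, 3):
        multiple = compound_multiple(rate, years)
        print(f"{label}: {multiple:.2f}x after {years} year(s)")
```

Under these assumptions, a market growing at about 80% a year is roughly 5.8 times its starting size after three years, while one growing at about 50% a year is roughly 3.4 times larger.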

Pricing is already responding to these pressures. DRAM and NAND average selling prices are expected to rise by roughly 70–80% into 2026, driving a step-up in margins and earnings across memory suppliers[6]. The effects are not confined to the memory companies themselves. In some enterprise hardware products, memory now accounts for roughly 10–25% of the total bill of materials[7], so rising DRAM prices are beginning to squeeze margins and influence build decisions. On the consumer side, memory availability has started to constrain low-end handset production as supply is increasingly prioritised toward AI and data centre customers.
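A rough way to see why this squeezes hardware margins is to combine the two ranges quoted above. The sketch below is a simplification that assumes the full price rise applies to the memory share of the bill of materials and that every other component cost stays flat; `bom_cost_increase` is a hypothetical helper, not a model used by the cited sources.

```python
def bom_cost_increase(memory_share: float, price_rise: float) -> float:
    """Fractional rise in total bill-of-materials cost.

    memory_share: memory as a fraction of the BOM (0.10 = 10%).
    price_rise:   fractional rise in memory prices (0.70 = 70%).
    Assumes all non-memory costs are unchanged (an illustrative simplification).
    """
    return memory_share * price_rise


# Ranges quoted in the text: memory at 10-25% of the BOM, prices up 70-80%.
for share in (0.10, 0.25):
    for rise in (0.70, 0.80):
        increase = bom_cost_increase(share, rise)
        print(f"memory share {share:.0%}, price rise {rise:.0%}: "
              f"BOM cost up {increase:.1%}")
```

On these assumptions, total component cost rises by somewhere between about 7% and 20%, which is large relative to typical hardware margins unless it can be passed on in pricing.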

Source: Micron, Bloomberg data as of 19 Feb 2026

Note: GB = gigabytes

When a single component begins to affect both enterprise margins and consumer output, it is a clear signal that the constraint is real. Memory is no longer just participating in the AI cycle but is shaping the pace and direction of the entire build-out.

Semiconductors are becoming infrastructure

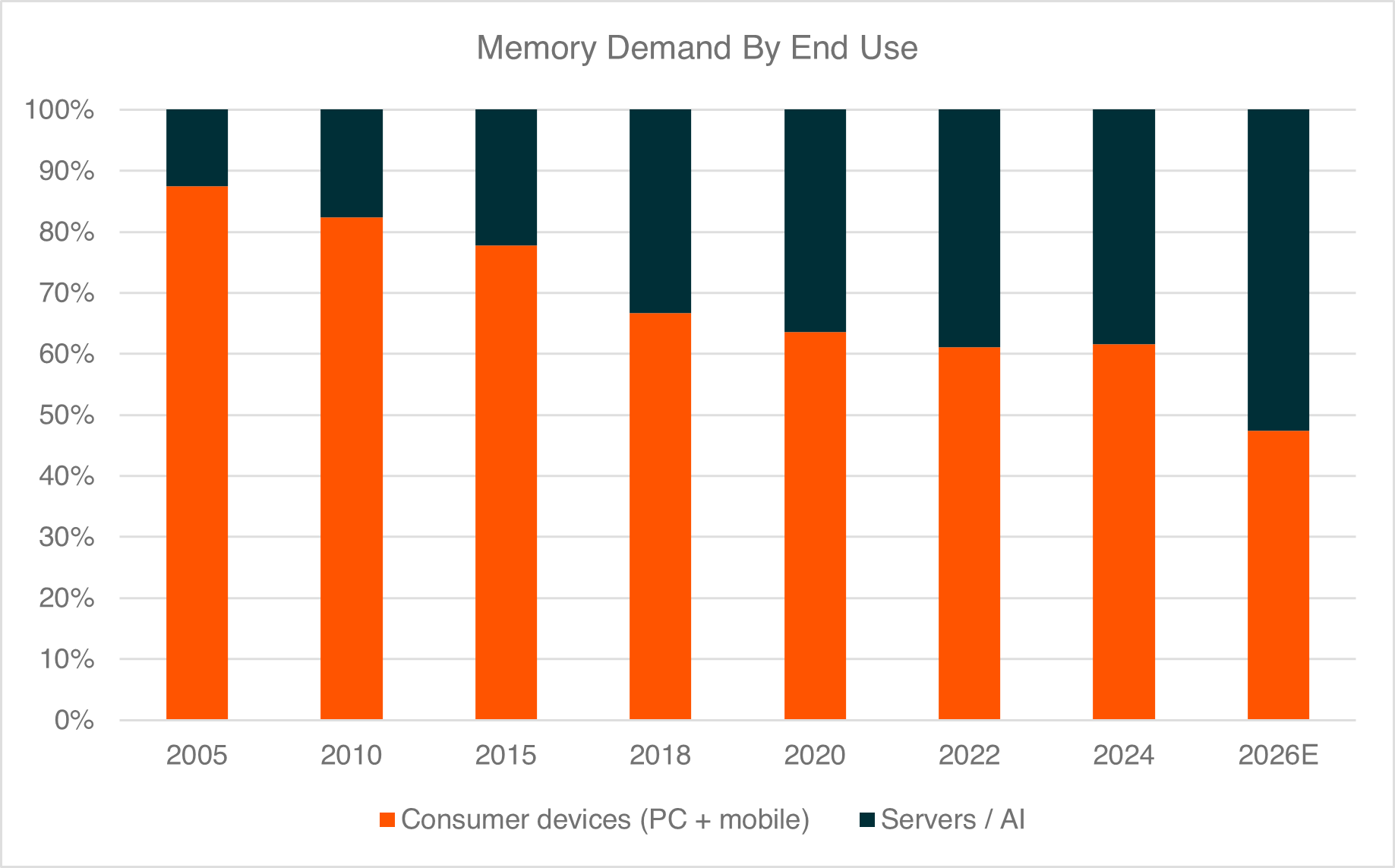

The memory shortage also highlights a broader shift across the semiconductor sector. It is no longer behaving purely like a cyclical technology segment tied to consumer demand and is increasingly taking on the characteristics of core infrastructure within the AI build-out.

Every AI system depends on a stack of semiconductor components, including compute chips, high-bandwidth memory, advanced packaging, interconnects, and storage. As AI demand accelerates, pressure moves through each of these layers, and the opportunity is no longer confined to one company or one segment. Instead, it is spreading across the entire semiconductor supply chain.

This cycle also differs from past memory booms. Capacity growth has been more measured, while technology transitions are limiting effective supply increases. Capital intensity in NAND is expected to remain lower than in DRAM over the next few years, and revenue per unit of capacity is projected to rise into the second half of the decade. That combination supports stronger pricing and a longer earnings cycle, making semiconductors look less like a short-term consumer rebound and more like a multi-year infrastructure build-out[8].

Source: Straits Research, IDC, Gartner, Bloomberg data as of 19 Feb 2026

As demand becomes more closely tied to data centre build-outs than to handset refresh cycles, the semiconductor sector begins to take on the characteristics of infrastructure. Revenue streams become increasingly linked to capital expenditure cycles, and the investment horizon shifts from short consumer-led swings to longer, more structural build-outs.

Power is the next constraint

As AI systems scale, the next bottleneck moves beyond the server and into the grid. AI workloads consume far more electricity than traditional computing tasks, and data centre capacity is being expanded rapidly to meet demand.

Global investment in power infrastructure has already reached around US$1.5 trillion per year, while data centre capital expenditure is expected to approach US$3 trillion by the end of the decade. Electricity demand from data centres is projected to increase by more than 100 gigawatts over the same period, requiring new generation capacity, grid upgrades, cooling systems, and energy storage. In other words, AI is not just a technology cycle. It is an energy and industrial cycle as well[9].
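For a sense of scale, an increase in demand measured in gigawatts can be translated into annual energy. The sketch below does that conversion for the 100 gigawatt figure quoted above. The utilisation values are assumptions chosen for illustration, since the text does not say how continuously that load would run, and `annual_energy_twh` is a hypothetical helper name.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours, ignoring leap years


def annual_energy_twh(capacity_gw: float, utilisation: float) -> float:
    """Annual energy in terawatt-hours for a load of `capacity_gw`.

    utilisation is the average fraction of the year the load runs at
    full power (1.0 = continuously). This value is an assumption.
    """
    gigawatt_hours = capacity_gw * HOURS_PER_YEAR * utilisation
    return gigawatt_hours / 1000.0  # 1 TWh = 1,000 GWh


# 100 GW is the figure from the text; utilisation levels are illustrative.
for utilisation in (0.5, 0.7, 1.0):
    energy = annual_energy_twh(100.0, utilisation)
    print(f"100 GW at {utilisation:.0%} utilisation: {energy:.0f} TWh per year")
```

If the additional 100 gigawatts ran continuously, it would correspond to about 876 terawatt-hours a year; lower utilisation scales that figure down proportionally.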

The same logic that drove the first phase of the AI trade is now pushing capital into physical infrastructure. As compute and memory scale, power becomes the next binding constraint. Data centres cannot operate without reliable electricity, and the scale of AI workloads is forcing utilities and grid operators to accelerate investment.

A two-layer AI infrastructure opportunity

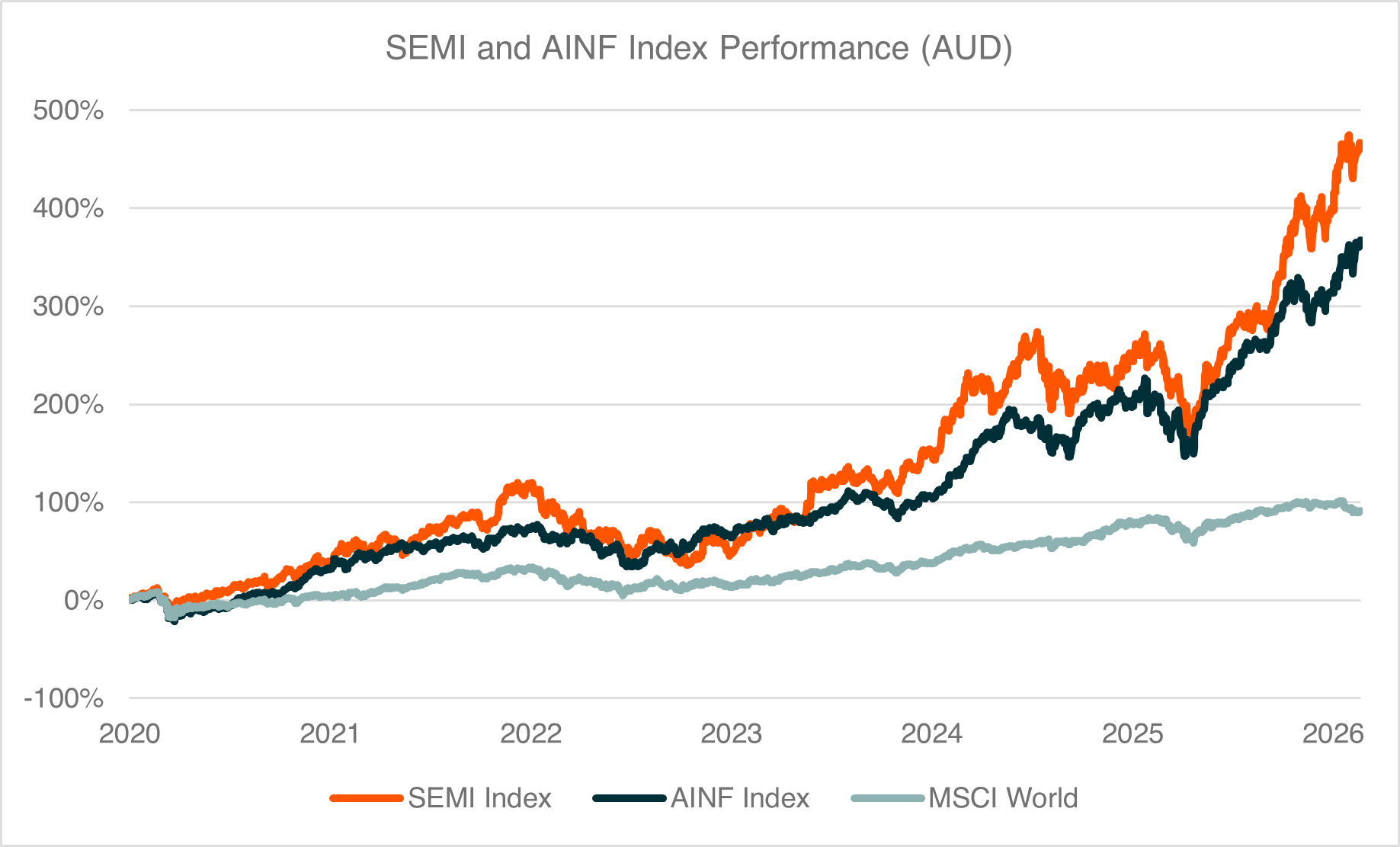

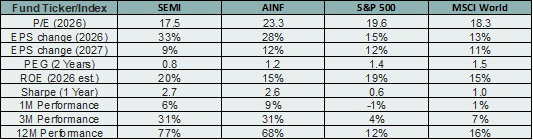

The AI build-out is now spreading across two distinct layers. The first is the digital layer, which sits within the semiconductor ecosystem and includes memory, foundries, chip designers, equipment, and advanced packaging. This is the foundation of AI compute, and the area where the first phase of the AI cycle was concentrated, represented across the semiconductor value chain in exposures such as the Global X Semiconductor ETF (SEMI).

The second is the physical layer, which allows that compute to operate at scale. This includes electricity generation, grid upgrades, data centres, cooling systems, and the broader industrial capacity required to support them. As AI workloads grow, this layer becomes just as critical as the chips themselves, which is the focus of the Global X Artificial Intelligence Infrastructure ETF (AINF).

Memory shortages are the clearest signal that the system is already under pressure. Demand is running ahead of supply across multiple parts of the stack, forcing capital to move beyond compute and into the infrastructure required to support it. For investors, the implication is that the AI opportunity is broadening. It is no longer confined to a handful of chip designers, but now spans both the semiconductor value chain and the infrastructure that powers it.

The next phase of the AI cycle sits at the intersection of semiconductors and physical infrastructure. As the bottlenecks move from compute to memory, and from memory to power, the opportunity naturally extends across both layers of the AI build-out.

Source: Bloomberg data as of 19 Feb 2026. Past performance is not an indicator of future performance.

You cannot invest directly in an index.
